It has been a week since the axios compromise, and we wanted to take some time to reflect. We published a detailed technical analysis during the incident and have been continuously updating it. But the human story hasn't been told yet: the frantic evening, the deleted GitHub issues, the community rallying together at midnight. This is that story.

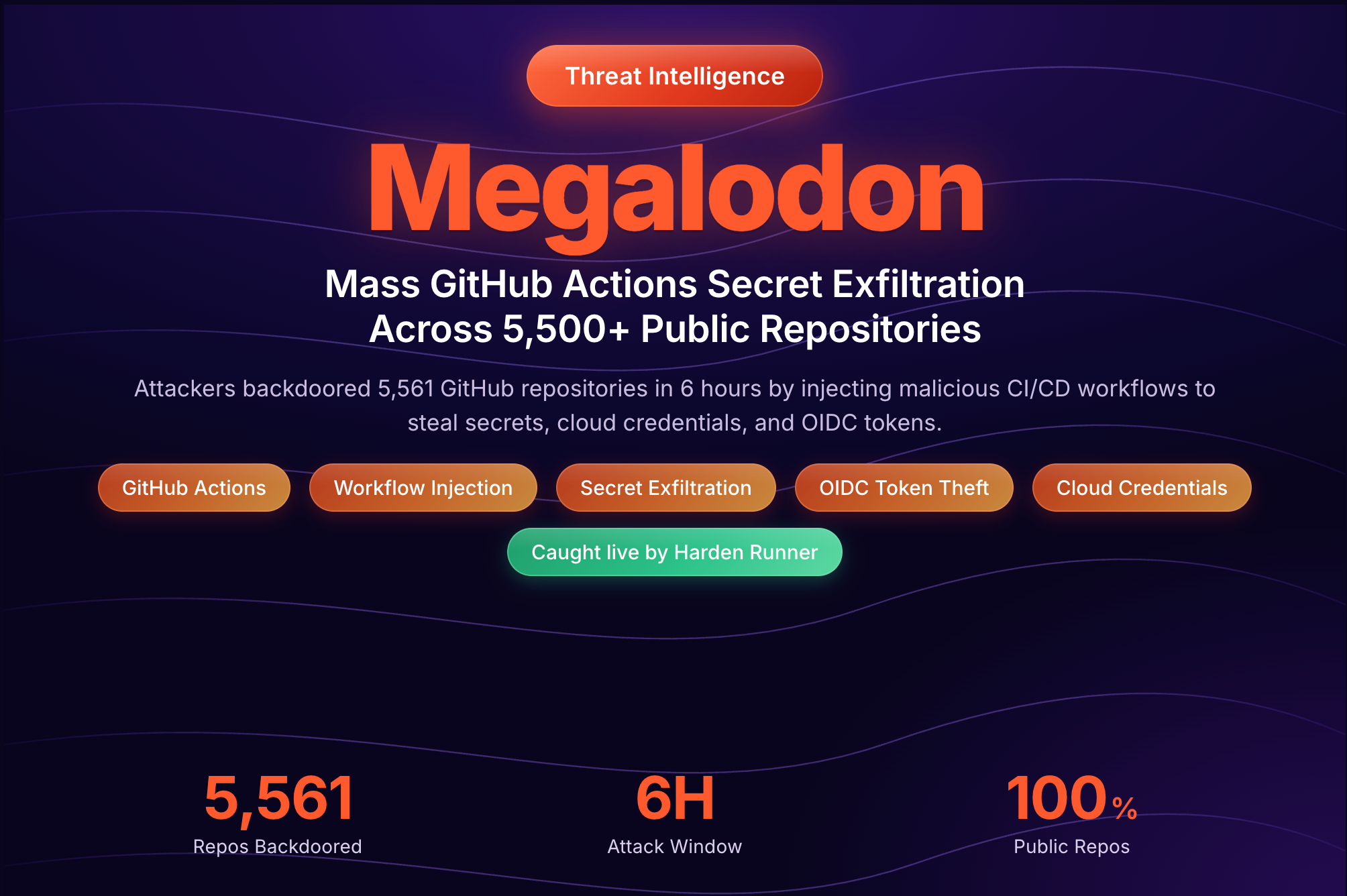

axios is the most popular HTTP client in the JavaScript ecosystem, with over 100 million weekly downloads and more than 17 million repositories and 240 thousand packages depending on it. By download count, this is the largest compromise of a single package in npm history. A state-sponsored threat actor hijacked it, and when we created a GitHub issue to warn the community, they deleted it. We created it again. They deleted it again. This happened about 20 times. This is the behind-the-scenes story of how it all unfolded.

The Alert

It was a Monday evening in Seattle. After a stretch of responding to high-profile supply chain attacks like TeamPCP/Trivy, Checkmarx KICS, and LiteLLM, we were hoping for a quiet night.

That didn't happen.

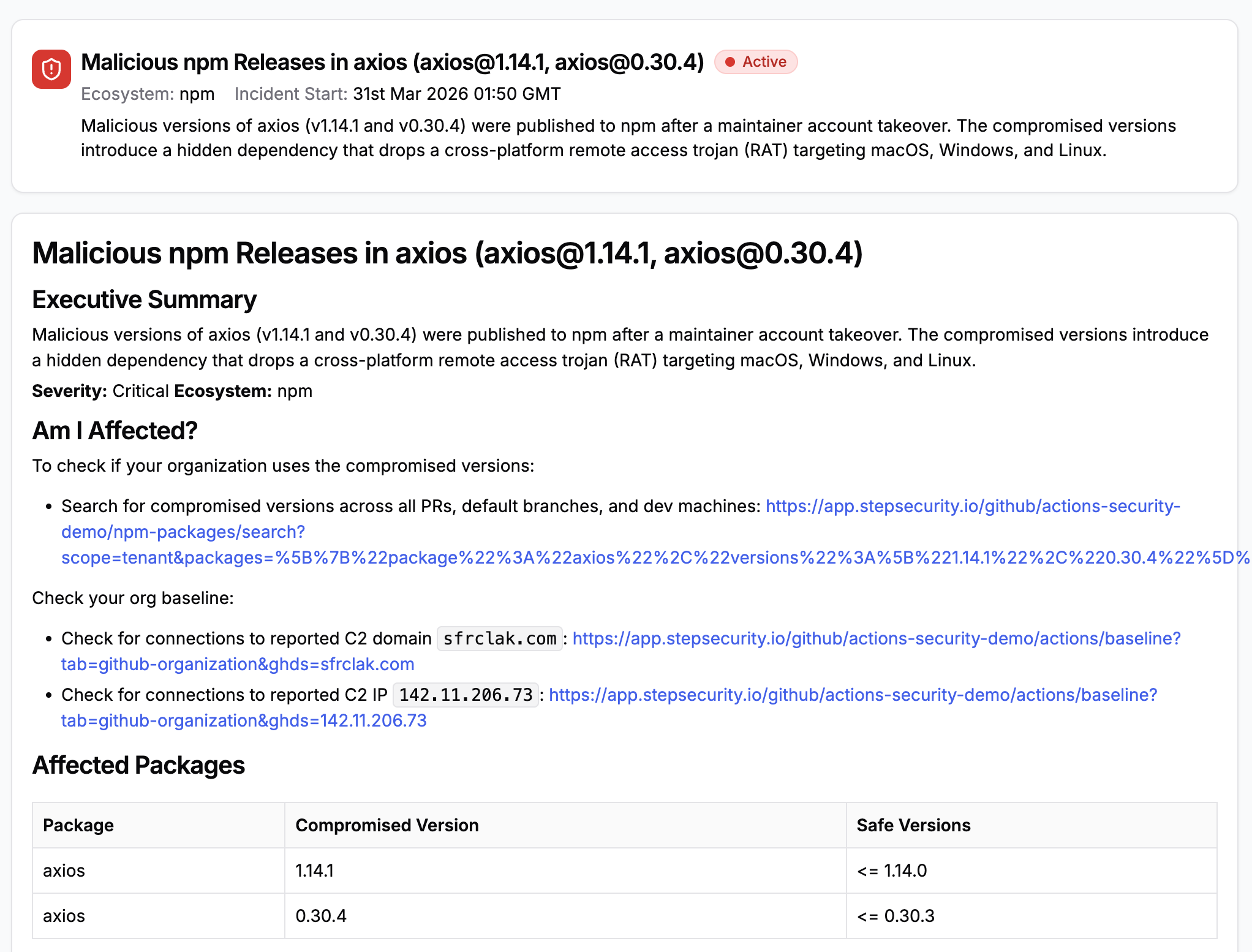

As soon as the compromised axios package was published to npm, StepSecurity's AI Package Analyst analyzed it in real time and returned a verdict of critical / rejected. The conclusion was unambiguous: this was a malicious version of the legitimate axios library, showing clear signs of a supply chain compromise through package hijacking. The automated analysis flagged six distinct suspicious indicators, such as an undocumented and unused dependency (plain-crypto-js) that was never imported anywhere in the source code, a version mismatch between package.json and internal version data, a missing CHANGELOG entry, and dropped provenance attestation.

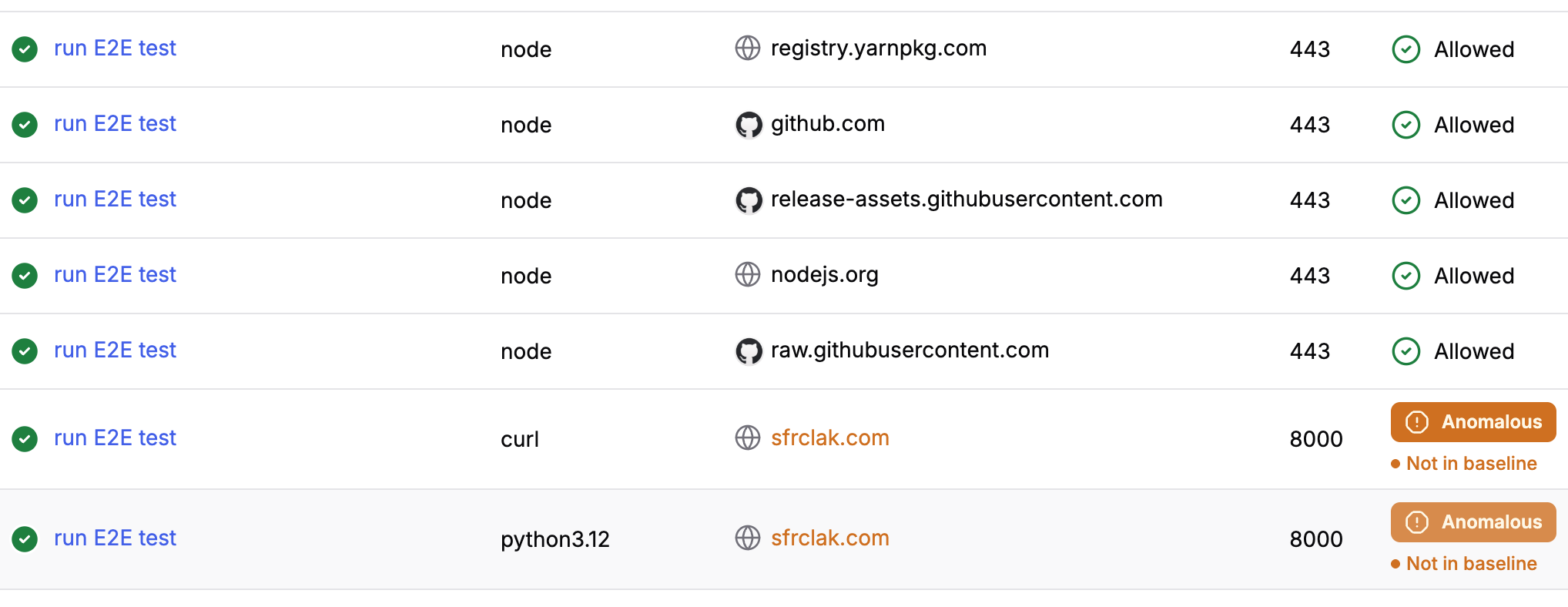

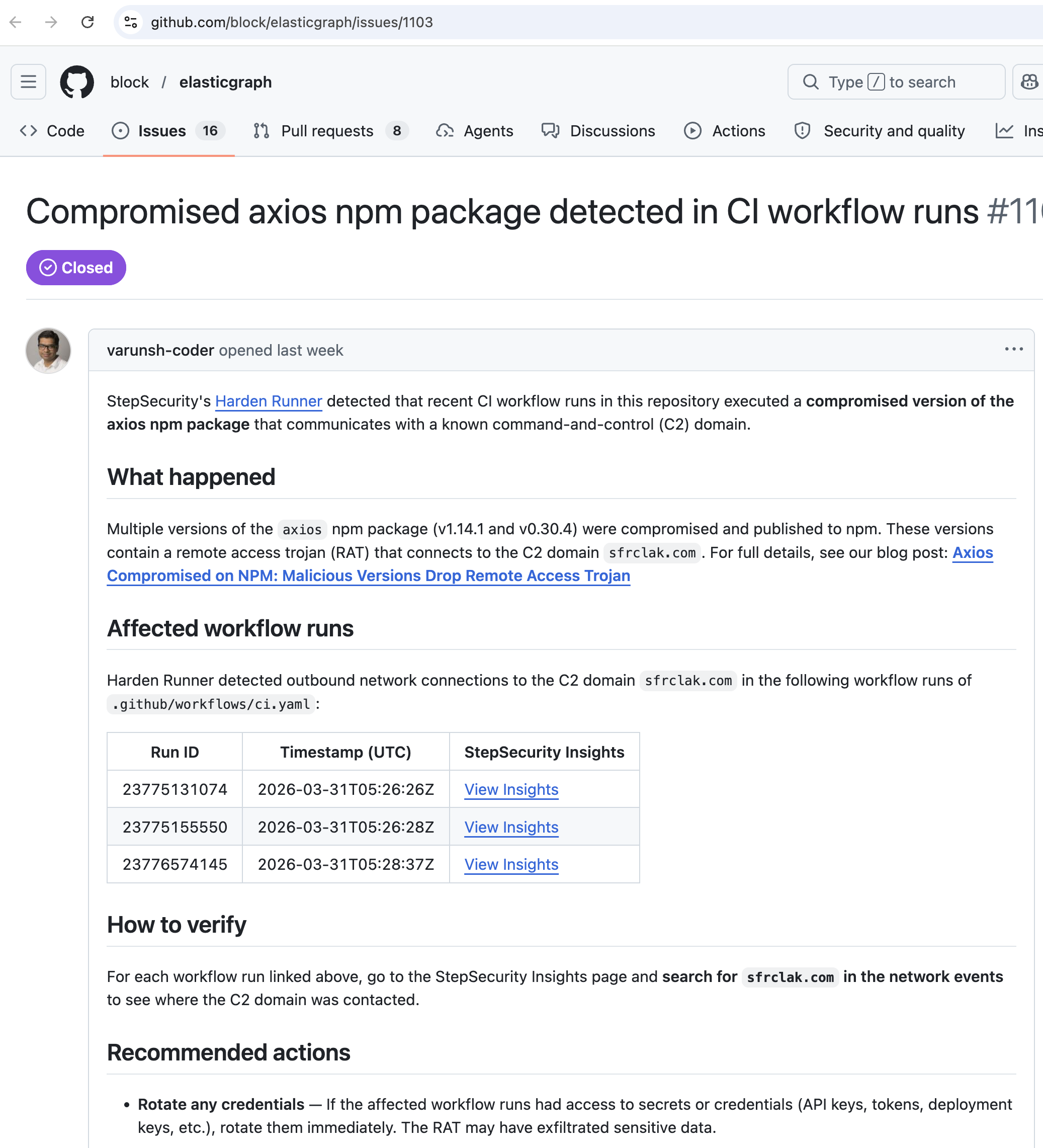

StepSecurity Harden-Runner, our purpose-built endpoint detection and response (EDR) agent for GitHub Actions, independently confirmed the analysis. Harden-Runner detected an anomalous outbound network call to the C2 domain sfrclak.com from CI/CD runners that had installed the compromised package. The network-level detection from Harden-Runner, combined with the static analysis from AI Package Analyst, gave us high confidence in the verdict before any human had reviewed the code.
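
For readers who want a feel for what an anomalous outbound call means in practice, here is a minimal Python sketch. It is not how Harden-Runner is implemented. It assumes you already have a list of hostnames a CI job contacted and a hypothetical allowlist of destinations the job is expected to reach; the function name and the sample allowlist are illustrative only.

```python
def anomalous_destinations(observed: list[str], allowed: set[str]) -> list[str]:
    """Return observed hostnames that match no allowlisted domain or its subdomains."""

    def is_allowed(host: str) -> bool:
        # Normalize case and a trailing dot before comparing.
        host = host.lower().rstrip(".")
        return any(host == domain or host.endswith("." + domain) for domain in allowed)

    # De-duplicate and sort so the result is stable across runs.
    return sorted({host for host in observed if not is_allowed(host)})


# Hypothetical baseline for a Node.js build job (an assumption, not a recommendation).
expected = {"registry.npmjs.org", "github.com"}
seen = ["registry.npmjs.org", "api.github.com", "sfrclak.com", "sfrclak.com"]
flagged = anomalous_destinations(seen, expected)
```

In this sample, only the C2 domain named above falls outside the baseline, which is the kind of signal that makes an unexpected destination stand out in an otherwise predictable build.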

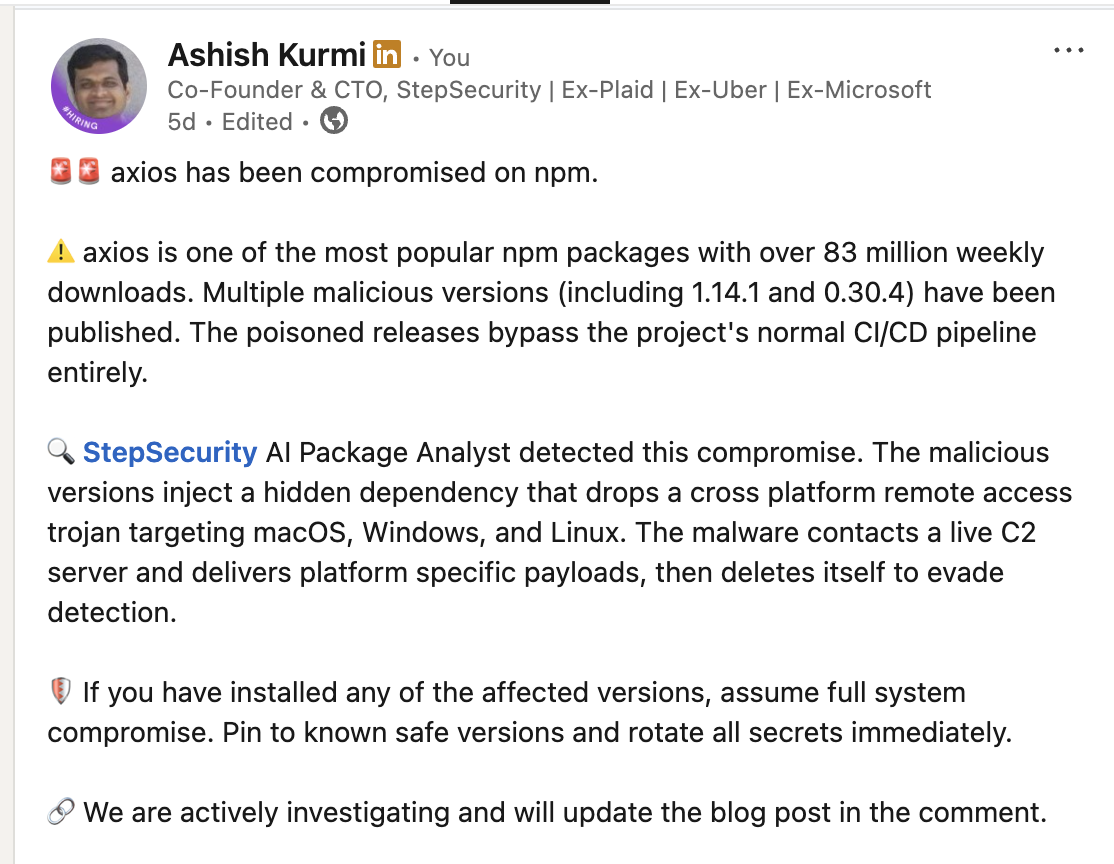

As part of our threat intelligence capability, StepSecurity operates a 24x7 SOC with PagerDuty integrations for critical detections. Ashish, our co-founder and CTO, was on call that night and was the first to get paged. Within minutes, cross-referencing the AI Package Analyst findings, Harden-Runner network alerts, and a quick manual code review, he confirmed what the automated systems were telling us: axios was compromised.

StepSecurity maintains over 250 GitHub Actions, primarily authored in Node.js, so we knew firsthand how ubiquitous axios is. The severity of this was immediately clear.

Ashish sent an urgent message to our general Slack channel sharing his assessment and started the incident bridge call.

I was about to head out for a grocery run when Ashish's message hit our Slack channel. I dropped everything and rushed back to join the bridge call. Our customer support team on the US East Coast was wrapping up their day. Our teammates in India were about to start theirs. Within minutes, multiple team members from across time zones joined the call.

Verifying the Unknown

Before sounding the alarm publicly, we wanted to be responsible. We had a detection and high confidence in its accuracy, but before telling the world that one of the most widely-used npm packages had been compromised, we needed to verify that we were not duplicating someone else's work or missing context that could change the picture.

We checked LinkedIn. We checked X. We searched the blogs of other software supply chain security companies. We looked for any posts, threads, or vendor alerts that might indicate someone had already flagged this. We found nothing.

As far as we could tell, nobody had published about the compromise yet, and the malicious versions were still available on npm for anyone running npm install to pull down. Every minute that passed, more developers and CI/CD pipelines were pulling in a package that would silently install a remote access trojan on their machines. With no one else raising the flag, we needed to act.

Sounding the Alarm

We moved on multiple fronts simultaneously. We published a threat center alert and notified our enterprise customers' SOC teams through our Slack, webhook, and S3 integrations so they could immediately assess their own exposure. This is part of our standard incident response workflow: when our SOC detects a critical supply chain compromise, our enterprise customers are the first to know.

We published a blog post with a summary of our findings, including the compromised versions (1.14.1 and 0.30.4), the injected plain-crypto-js dependency, and the C2 domain. Ashish posted an advisory on LinkedIn breaking down the key indicators of compromise so that developers scrolling their feeds would see the warning.

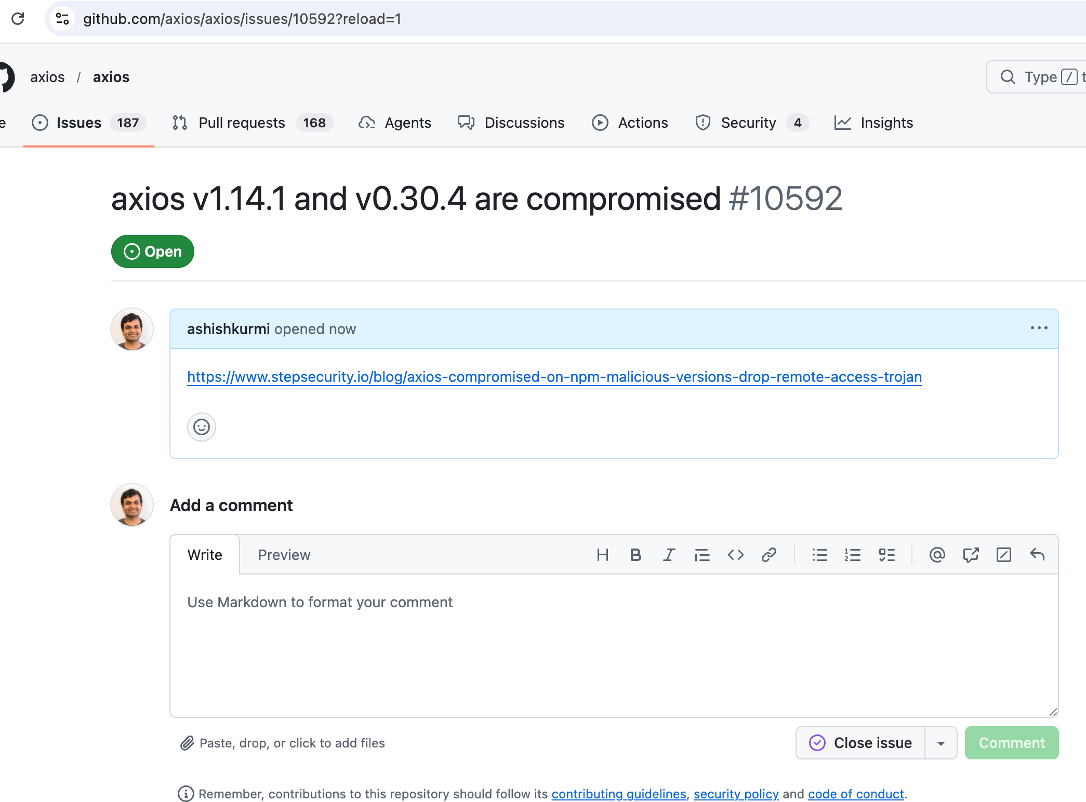

Then Ashish turned to the axios GitHub repository and created an issue to alert the community and coordinate with the maintainers.

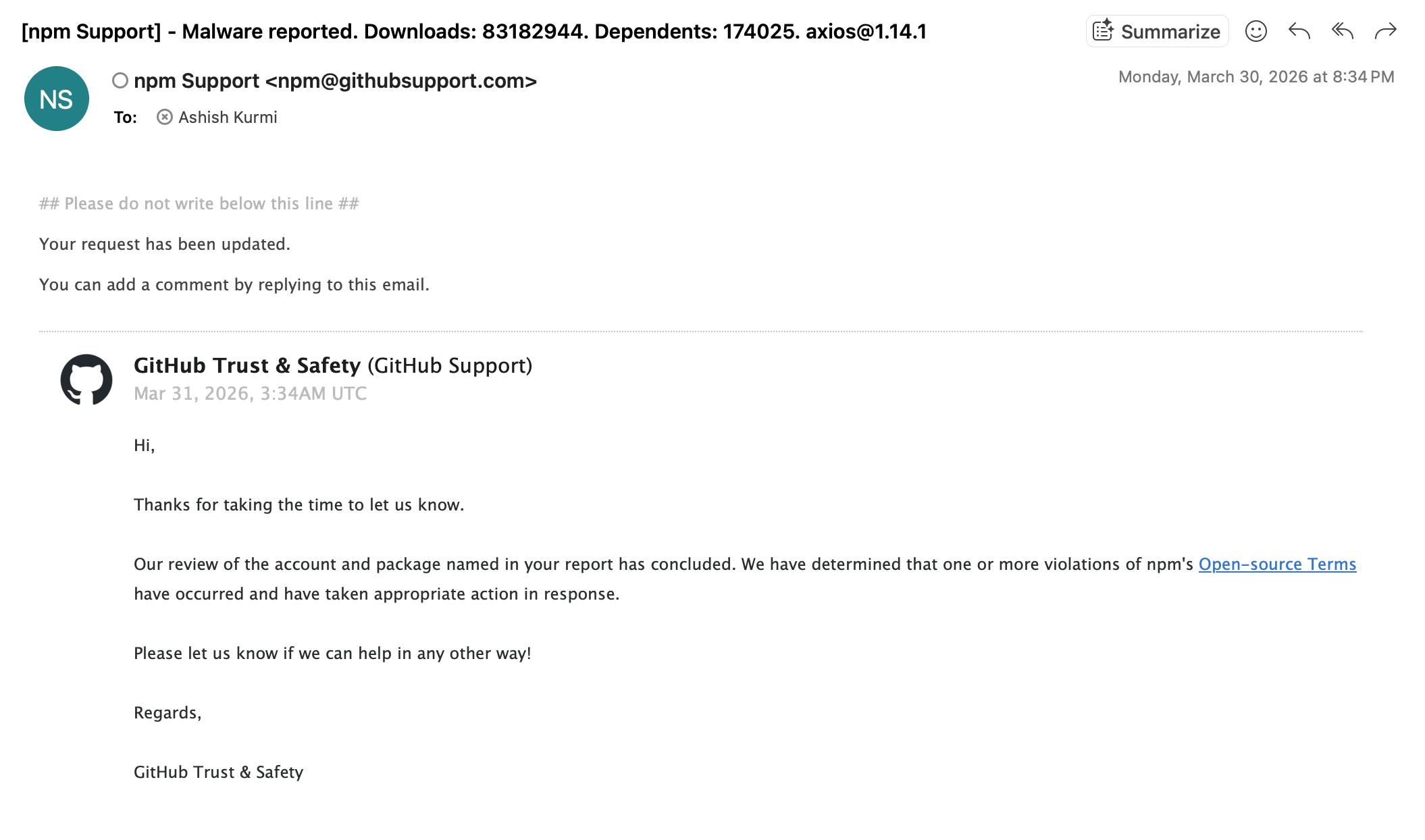

He also reported the compromised packages as malware to npm, flagging both axios@1.14.1 and axios@0.30.4 along with the malicious plain-crypto-js dependency.

The Threat Actor Strikes Back

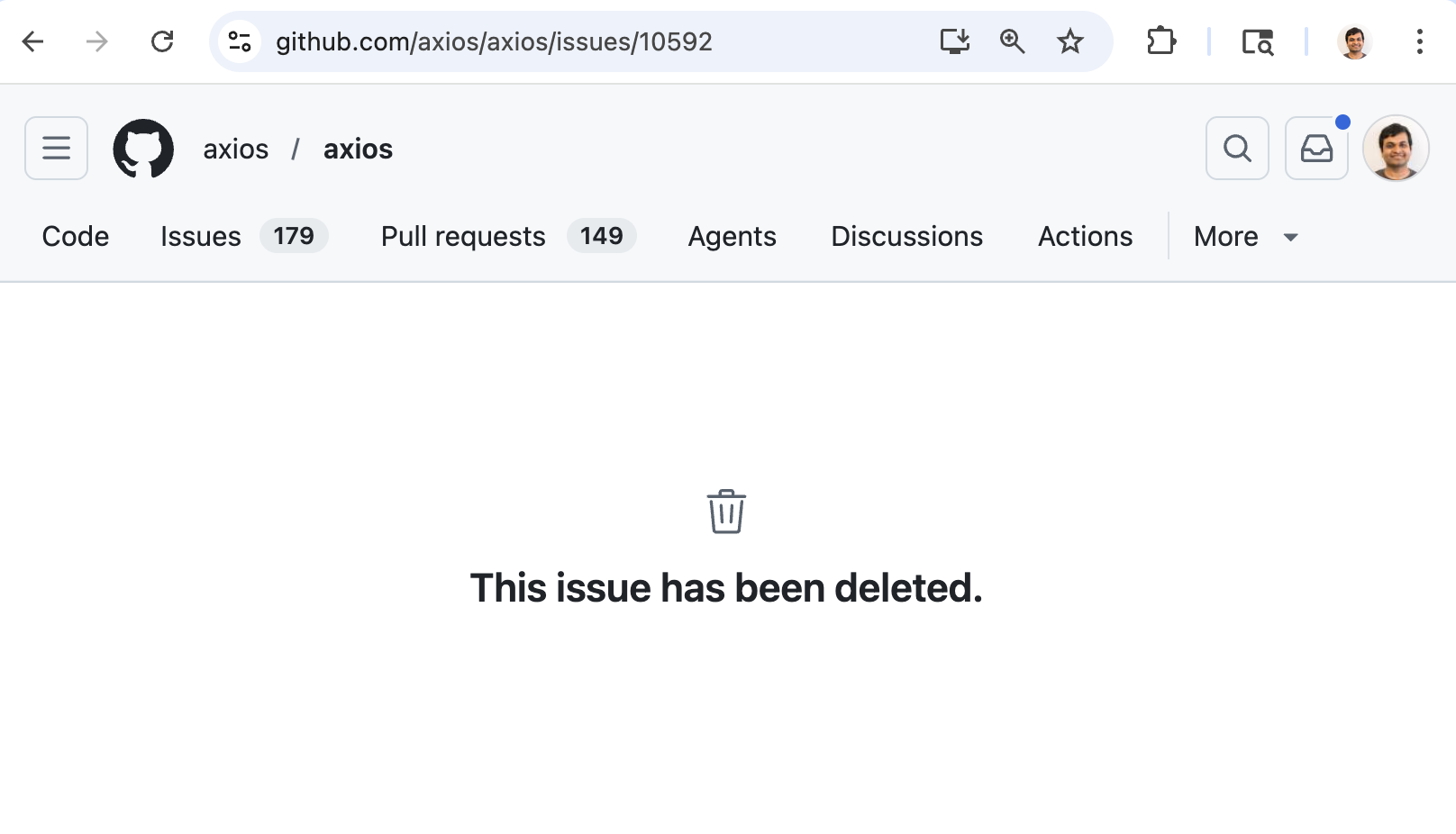

Then something we didn't expect happened. When Ashish went back to the GitHub repository to check on the issue he had created, it was gone. Deleted. No trace of it.

The threat actor had compromised not just the maintainer's npm account, but their GitHub account as well. And they were actively monitoring the repository, using the compromised account to silently delete every issue that tried to warn the community about what was happening.

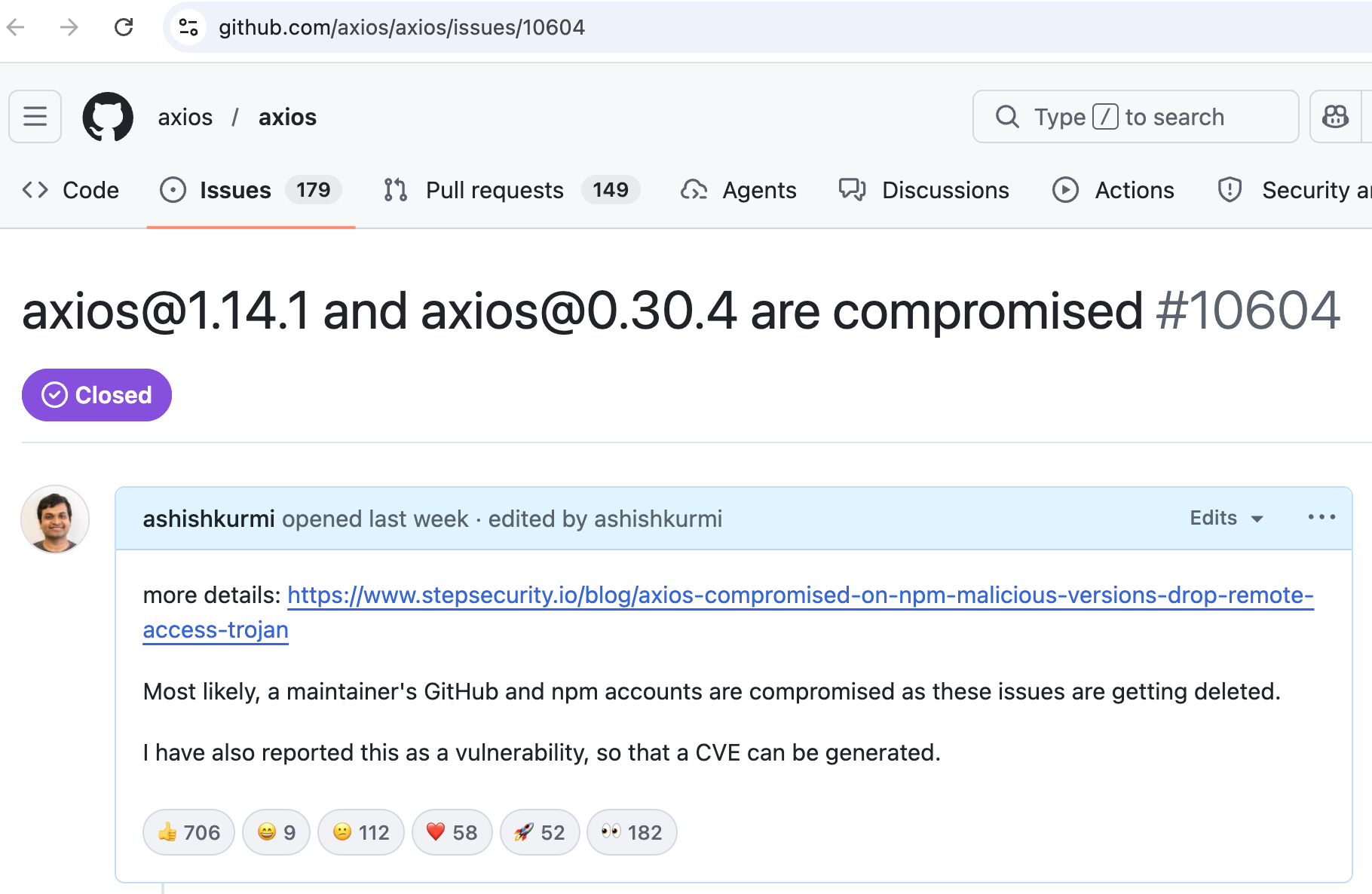

Ashish created the issue again. Community members who had also noticed something was wrong immediately began commenting on it, sharing their own findings, asking questions, and confirming the compromise from their own analysis. Within minutes, the issue was deleted again.

So he created it again. And again. And again.

Every single time Ashish created a GitHub issue, community members would jump in with comments. Every single time, the adversary would use the compromised maintainer's account to wipe it. Each deletion confirmed what we had suspected: the attacker didn't just have the npm token, they had full control of the maintainer's GitHub account and were actively monitoring the repository.

This happened approximately 20 times. It was a real-time tug of war between the community trying to warn developers and the threat actor trying to keep the compromise hidden.

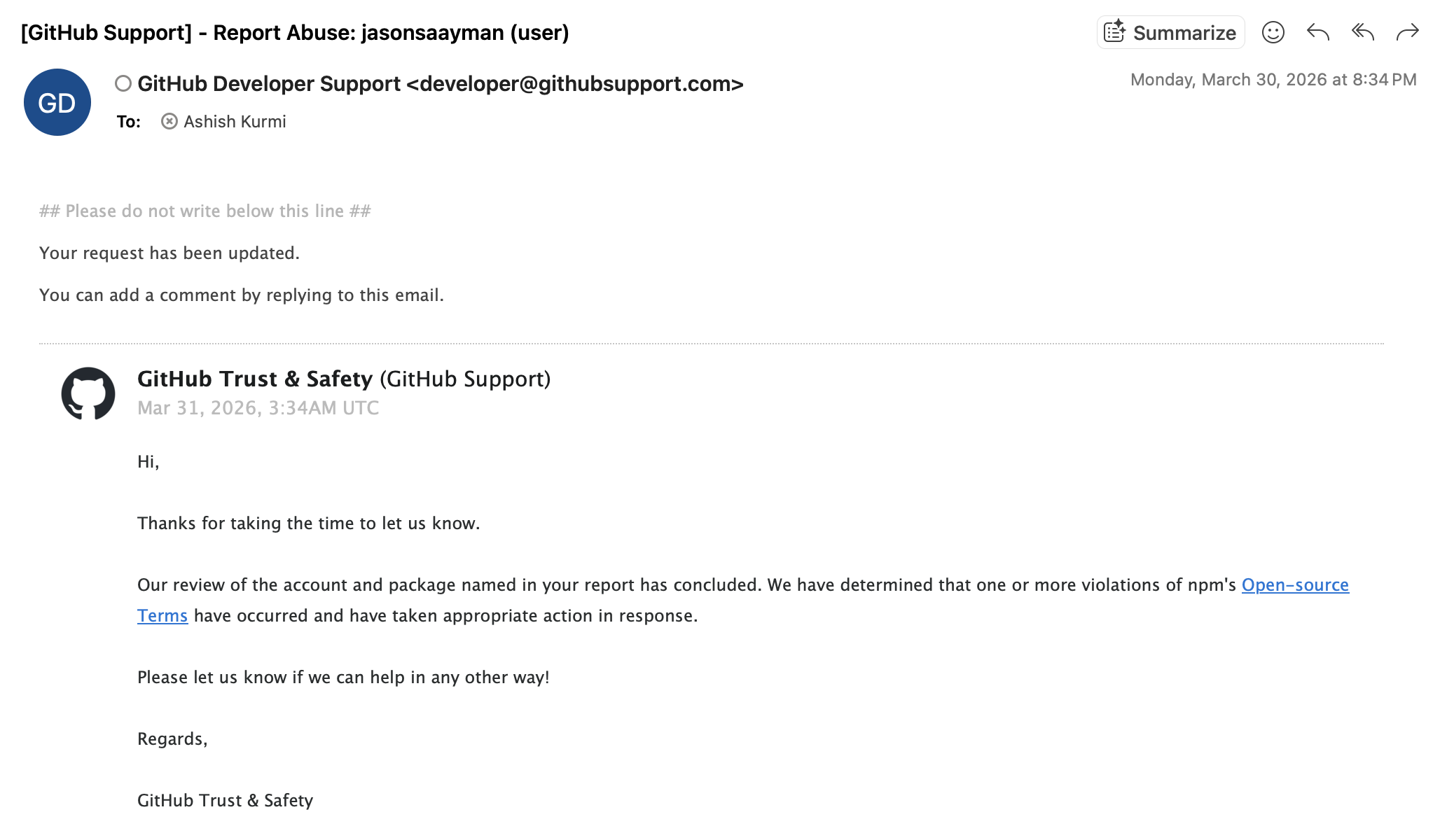

While this cat-and-mouse game played out on GitHub, Ashish also raised a GitHub account abuse ticket, explaining that the maintainer's account appeared to be compromised and was being used to delete legitimate security advisories. Both GitHub and npm support acted swiftly. GitHub suspended the compromised maintainer's account, stopping the issue deletions and preventing any further malicious actions from that account.

npm removed the malicious packages from the registry and placed security holds on the attacker's auxiliary packages.

Once the compromised account was suspended, GitHub issue #10604 finally stuck. It became the central thread for the community's recovery effort, eventually garnering over 1100 reactions and more than 800 comments from developers around the world sharing indicators of compromise, remediation steps, and their own forensic findings.

Working With the Community

With the immediate threat contained, we organized into sub-teams to handle the next phase of the response. One team focused on direct support for StepSecurity enterprise customers. Another dug deeper into the technical forensics and documented the full attack chain. A third turned its attention to the broader open-source community.

StepSecurity Harden-Runner's community tier is free for open-source projects, and it is currently used by more than 12,000 open-source projects. Harden-Runner insights are public for community-tier users by design, so that anyone can view them without requiring a login. Using these public insights, we discovered that several open-source projects had installed the compromised package during the attack window. We proactively created GitHub issues in affected repositories to notify their maintainers, linking to the relevant Harden-Runner insights pages so they could see the evidence for themselves.

We were getting questions from the community across multiple channels: GitHub, LinkedIn, direct messages, and email. Developers wanted to know whether they were affected, what the indicators of compromise were, and whether deleting node_modules and reinstalling was sufficient (it was not).
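
As a first-pass answer to "am I affected?", a lockfile can be checked against the indicators from this incident: axios 1.14.1 and 0.30.4, plus any version of plain-crypto-js. The Python sketch below is a minimal illustration, not an official detection tool. It assumes an npm lockfile in the v2/v3 layout, where a top-level "packages" object is keyed by install path, and the helper names are our own.

```python
# Indicators taken from this incident.
COMPROMISED_VERSIONS = {"axios": {"1.14.1", "0.30.4"}}
MALICIOUS_PACKAGES = {"plain-crypto-js"}  # flagged at any version


def package_name(lock_key: str) -> str:
    """Map 'node_modules/a/node_modules/@scope/b' to '@scope/b'."""
    return lock_key.rpartition("node_modules/")[2]


def find_iocs(lock: dict) -> list[tuple[str, str, str]]:
    """Return (install path, package name, version) for every matching entry."""
    hits = []
    for key, meta in lock.get("packages", {}).items():
        if not key:  # the empty key is the root project itself
            continue
        name = package_name(key)
        version = meta.get("version", "")
        if name in MALICIOUS_PACKAGES or version in COMPROMISED_VERSIONS.get(name, set()):
            hits.append((key, name, version))
    return sorted(hits)


# In-memory sample; the plain-crypto-js version here is a placeholder.
sample_lock = {
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo-app", "version": "1.0.0"},
        "node_modules/axios": {"version": "1.14.1"},
        "node_modules/foo/node_modules/axios": {"version": "1.13.0"},
        "node_modules/plain-crypto-js": {"version": "0.0.0"},
    },
}
hits = find_iocs(sample_lock)
```

To run it against a real project, load the file with `json.load(open("package-lock.json"))` and pass the result to `find_iocs`. A check like this only inspects the lockfile. It says nothing about what may already have executed on a machine that installed one of these versions.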

At around midnight Pacific Time, I suggested we host a community call on Wednesday at 10 AM PT and set up a webinar registration page. I honestly wasn't sure how many people would show up on that kind of notice. To our genuine surprise, the response was overwhelming: 472 people registered, and on Wednesday morning 200 community members attended the call live.

The call was something special. It was not just us presenting findings. It was the community sharing, questioning, and helping each other in real time. Attendees raised insightful questions about whether secondary sleeper attacks could follow from credentials exfiltrated in earlier supply chain incidents. One community member drew a parallel to the Shai-Hulud campaign and asked whether attackers sitting on stolen credentials might be waiting patiently before striking, "like the shapeshifters in Dune 3." Someone asked about the tension between cooldown policies and CVE-driven urgency: if a new package version patches a critical vulnerability, how do you balance the need to update quickly with the risk that the patch itself could be compromised? Another attendee asked whether the C2 server may have served different RAT variants based on the device fingerprinting data the first-stage payload was collecting. A developer shared that their Azure Kubernetes cluster had installed the malicious version but their firewall had blocked the C2 domain, and wanted to know if that was sufficient. Community members pointed out additional C2 infrastructure from a Wiz article that we had not seen yet, and we updated our blog post with the new IOCs as a result.
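
The cooldown question is easier to reason about with a concrete rule in front of you. The Python sketch below is one hypothetical way to express the trade-off, not a recommendation from the call: a new version must age for a default period before it is adopted, and a release that fixes a known CVE gets a shorter wait rather than none. The function name and both durations are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone


def cooldown_elapsed(
    published_at: datetime,
    now: datetime,
    fixes_known_cve: bool = False,
    cooldown: timedelta = timedelta(days=7),         # hypothetical default wait
    urgent_cooldown: timedelta = timedelta(days=1),  # hypothetical shortened wait
) -> bool:
    """Return True once a release has aged past the applicable waiting period."""
    required = urgent_cooldown if fixes_known_cve else cooldown
    return now - published_at >= required


published = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
two_days_later = published + timedelta(days=2)

routine_ok = cooldown_elapsed(published, two_days_later)
urgent_ok = cooldown_elapsed(published, two_days_later, fixes_known_cve=True)
```

Two days after publication, the routine update is still held back while the security fix is allowed through. The shortened window keeps some time for a malicious release to be caught while limiting how long a known vulnerability stays unpatched.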

We ourselves learned several things from that conversation that improved our understanding of the incident. The webinar reminded us that the best security research is collaborative. You can watch the full recording on YouTube.

Throughout the incident, we continuously updated our blog post as our understanding deepened. It became a living document, evolving with every new finding the community shared with us, from additional IOCs to remediation guidance.

The Ripple Effect

It was gratifying, and humbling, to see the broader community come together so quickly around this incident.

On GitHub, issue #10604 became the de facto coordination hub for the response. Community members contributed forensic details, shared detection scripts, asked and answered questions about remediation, and helped each other assess their exposure. The axios project's other collaborators worked around the clock to restore trust in the package once the compromised account was suspended, publishing clean versions and communicating transparently with their user base.

It was great to see other software supply chain security companies raise awareness about the issue and contribute their own analysis. Datadog, Aikido, Wiz, Endor Labs, SafeDep, Socket, Huntress, Malwarebytes, Sophos, and SANS all published detailed write-ups and advisories that helped amplify the alert across the ecosystem. This is how the security community should work: everyone contributing what they can, when they can. No single company has complete visibility, and the faster information flows, the faster the ecosystem can respond.

Media Coverage

We are really grateful for the press coverage StepSecurity received during this incident. The scale of the axios attack meant it reached well beyond the security community and into mainstream technology coverage. Bloomberg covered the incident, bringing it to the attention of enterprise leadership and boards of directors who may not follow npm advisories but do follow financial news. Dark Reading published a detailed analysis. TechCrunch covered the North Korean attribution angle. CSO Online called it the highest-impact npm supply chain attack on record.

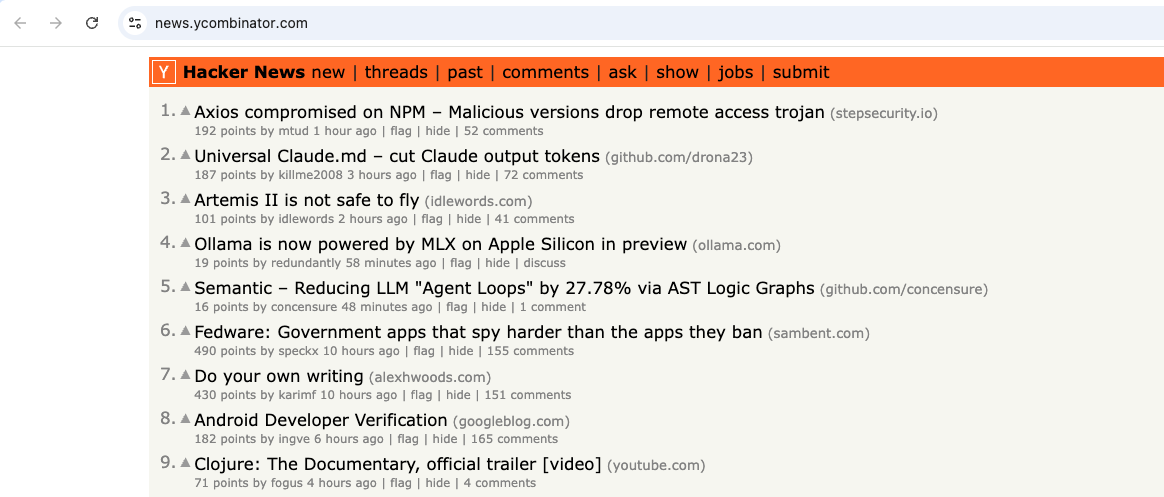

The Hacker News, Bleeping Computer, The Register, Help Net Security, SC Magazine, VentureBeat, and InfoQ all published their own coverage. Our blog post reached #1 on Hacker News, where it sat at the top of the front page for hours. On the threat intelligence side, Google's Threat Intelligence Group, Microsoft Security, Palo Alto Unit 42, Cisco Talos, Elastic Security Labs, and Datadog Security Labs published in-depth technical analyses and detection guidance.

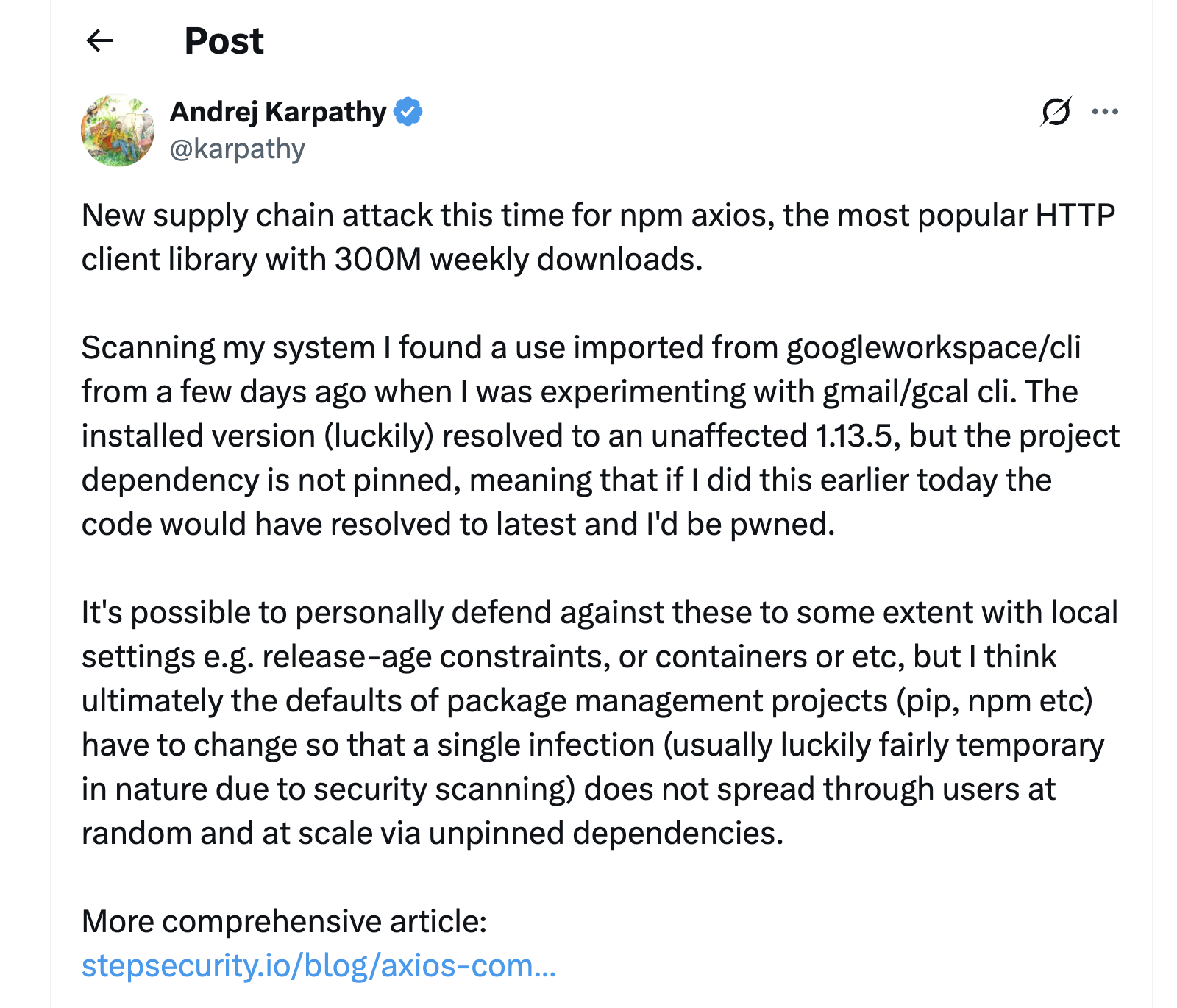

Andrej Karpathy shared our blog on X. Fireship, the popular YouTube channel with over 4 million subscribers, featured our technical analysis in their coverage of the incident. Moments like these remind a small team like ours that the work matters.

A Community Confirmation

In the days following the incident, the compromised maintainer themselves posted a detailed comment on GitHub issue #10604 confirming what had happened. They had been targeted by a highly sophisticated social engineering campaign. An individual approached them posing as a founder of a legitimate company and initiated what appeared to be a normal open-source collaboration opportunity. The attacker created a fake Slack workspace branded to the supposed company, set up CI infrastructure to make the collaboration appear genuine, and scheduled a video call on Microsoft Teams. During the call, they used a fake error message to trick the maintainer into downloading a "fix" that turned out to be a remote access trojan.

Through this RAT, the attacker gained access to the maintainer's machine and stole a long-lived npm access token. This token allowed them to publish the compromised axios versions directly, bypassing the project's OIDC-based publishing controls that would have otherwise required the publish to originate from a trusted CI/CD environment. The attacker also gained access to the maintainer's GitHub account, which they used to delete the issues we were creating to warn the community.

Google's Threat Intelligence Group subsequently attributed the attack to North Korean threat actor UNC1069. Microsoft Threat Intelligence independently attributed it to Sapphire Sleet, their name for the same North Korean state-sponsored group. This was not a random opportunist. It was a coordinated, state-sponsored operation specifically targeting one of the most widely-used packages in the entire npm ecosystem.

The maintainer's willingness to share exactly what happened takes real courage. Social engineering campaigns of this sophistication can fool anyone. By being transparent about the attack vector, they helped the entire open-source community understand the threat and take steps to protect themselves. We have enormous respect for that.

The Bigger Picture

The axios compromise did not happen in isolation. Over the past year, our team has detected and responded to a series of major supply chain attacks, including tj-actions/changed-files, Shai-Hulud (780+ npm packages), TeamPCP/Trivy, Checkmarx KICS, and LiteLLM. The frequency is accelerating, and the axios attack came right on the heels of three other major incidents in March 2026 alone.

Each of these attacks shows increasing sophistication. The axios attack, with its pre-staged decoy dependency, its precisely timed publishing across both current and legacy branches, its device fingerprinting and platform-specific RAT delivery, and its active real-time suppression of community warnings by deleting GitHub issues, represents a level of operational tradecraft that should give every engineering organization pause.

These attacks share a common pattern. They target the trust relationships that make open source work: maintainer credentials, publishing tokens, CI/CD pipelines, the assumption that a new version of a package you already depend on is safe to install. And they are increasingly attributed to state-sponsored actors with significant resources, patience, and operational security.

We are grateful to the axios maintainers for their transparency and resilience. We are grateful to every community member who commented on the GitHub issue, joined our community call, shared their findings, and helped others understand whether they were affected. We are grateful to GitHub and npm for acting quickly to suspend the compromised accounts and remove the malicious packages. And we are grateful to the broader security community, the researchers, the vendors, the journalists, the developers who took the time to spread the word, for making the ecosystem's response to this incident as fast and coordinated as it was.

Supply chain attacks are not slowing down. If anything, the pace is accelerating, and the adversaries are getting better. The question is not whether the next one will happen, but whether we will be ready when it does.

When I think about this past week, what stays with me is the image of our small team, spread across time zones, going up against a state-sponsored threat actor in real time. I am incredibly proud of what this team pulled off. At StepSecurity, we will keep building, keep detecting, and keep showing up when it matters most.