AI coding agents like Claude Code, Cursor, Codex, Gemini, and GitHub Copilot are now standard tools across engineering organizations. They write code, resolve dependencies, interact with external services, and ship changes through your entire development pipeline — often with minimal human oversight.

The security challenge this creates goes far beyond AI-generated code quality. It spans the entire software development lifecycle, from the development environment where an AI coding agent first runs, through the code repository where it pushes changes, to the CI/CD pipeline where it builds and deploys.

Recent attacks prove this isn't theoretical. The Shai-Hulud "Second Coming" campaign compromised npm packages that executed on developer machines, stole credentials, and weaponized AI CLI tools for reconnaissance. The NX Build System compromise hit developers through a VSCode extension that silently ran malicious code at activation. And the Trust Wallet incident showed how stolen developer credentials led to a malicious browser extension published to the Chrome Web Store, impacting thousands of their users.

These attacks share a common thread: they target the stages of development where AI coding agents now operate.


The Agentic Software Development Flow & Why Every Stage Is an Attack Surface

Agentic software development follows a three-stage flow, and each stage introduces a distinct attack surface.

Stage 1: AI Coding Agent on Developer Machine or Ephemeral Dev Environment

This is where vibe coding begins. An AI coding agent receives a natural language prompt and starts building. Whether running locally or in an ephemeral cloud environment, it operates with access to credentials, SSH keys, cloud tokens, and source code.

IDE extensions can be compromised: the NX Console VSCode extension silently ran malicious code at activation. MCP servers connecting AI agents to development tools lack authentication standards. Rules File Backdoor attacks can poison agent behavior invisibly. And when credential theft occurs at this stage, the consequences cascade. As the Trust Wallet incident demonstrated, stolen developer credentials enabled attackers to publish a malicious release that bypassed the organization's internal approval process entirely.

Stage 2: Code Repository

AI coding agents dynamically resolve and install packages based on task analysis, and those dependencies enter the codebase through pull requests. The agent optimizes for task completion, not supply chain risk assessment. When a supply chain incident breaks, security teams need to instantly answer: where is this package across my entire organization?
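
To make that question concrete, here is a minimal sketch of answering it by scanning lockfiles. It assumes each repository commits a `package-lock.json` with lockfileVersion 2 or 3, where installed packages are keyed by path under `packages`. The function name `find_package`, the repository labels, and the package names are hypothetical, and this is not a description of how StepSecurity's package search works.

```python
def find_package(lockfiles, name, version=None):
    """Return sorted (repo, version) pairs for every locked copy of `name`.

    `lockfiles` maps a repository label to the parsed contents of its
    package-lock.json (for example, loaded with json.load).
    """
    marker = "node_modules/"
    hits = set()
    for repo, lock in lockfiles.items():
        for path, meta in lock.get("packages", {}).items():
            # Keys look like "node_modules/a/node_modules/b"; the package
            # name is whatever follows the last "node_modules/" segment.
            idx = path.rfind(marker)
            if idx == -1:
                continue  # root project or workspace entry, not a dependency
            found = meta.get("version")
            if found is None:
                continue  # e.g. a symlinked workspace entry without a version
            if path[idx + len(marker):] == name and version in (None, found):
                hits.add((repo, found))
    return sorted(hits)


# Hypothetical data standing in for lockfiles collected across an org.
lockfiles = {
    "org/web-app": {"packages": {
        "": {"name": "web-app"},
        "node_modules/example-lib": {"version": "2.4.1"},
    }},
    "org/api": {"packages": {
        "node_modules/tooling/node_modules/example-lib": {"version": "2.3.0"},
    }},
}

print(find_package(lockfiles, "example-lib"))
print(find_package(lockfiles, "example-lib", version="2.4.1"))
```

Taking the last `node_modules/` segment means transitive copies nested under another dependency are found too, which matters because a compromised package is often pulled in indirectly.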

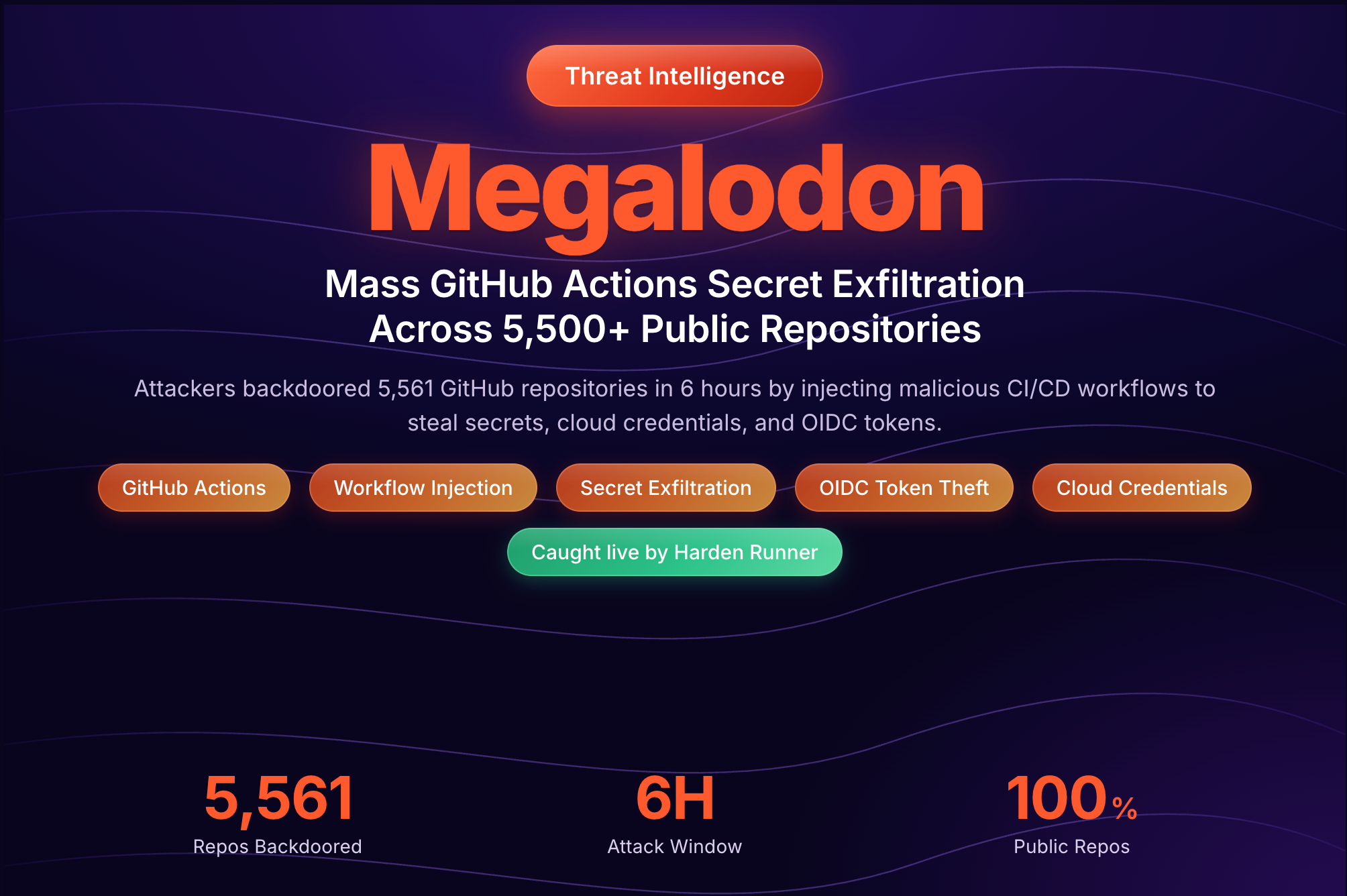

Stage 3: CI/CD Pipeline

Coding agents such as Claude Code, Codex, and GitHub Copilot now operate directly inside GitHub Actions with GITHUB_TOKEN privileges: creating branches, pushing commits, installing dependencies, and interacting with GitHub APIs autonomously. CI/CD pipelines have privileged access to production secrets and infrastructure, yet security teams face a critical visibility gap. You can't see what processes an AI coding agent spawns, what endpoints it contacts, or what packages it installs at runtime.


Why Existing Vibe Coding Security Solutions Fall Short

A growing number of security vendors are positioning around "vibe coding security," but almost all focus on a single stage of the agentic development lifecycle.

IDE-layer solutions scan AI-generated code or govern plugins but provide zero visibility into CI/CD pipelines or npm supply chain attacks at scale. SAST/DAST tools scan code output but don't monitor agent runtime behavior. EDR/XDR lacks CI/CD context. CNAPP/CSPM is built for cloud infrastructure, not ephemeral runners or developer workstations.

The core gap: no single point solution provides visibility and enforcement across developer machines, code repositories, and CI/CD pipelines simultaneously. Attackers know this. The Shai-Hulud campaign exploited all three stages in a single operation.


Securing Vibe Coding with StepSecurity: End-to-End Defense

StepSecurity provides end-to-end defense across all three stages of the agentic software development lifecycle: AI coding agents on developer machines, code repositories, and CI/CD pipelines.

These capabilities are battle-tested. StepSecurity was the first to detect the tj-actions supply chain attack and several other major supply chain attacks in 2025, providing customers with early warning before public advisories existed.


Stage 1: AI Coding Agent and Development Environment Security (Dev Machine Guard)

The development environment, where sensitive credentials live and untrusted code executes daily, has historically lacked purpose-built supply chain security controls. StepSecurity Dev Machine Guard fills this gap.

- AI Agent Discovery: Automatically discover and track all AI coding assistants across your organization.

- MCP Server Visibility: See which Model Context Protocol servers are connecting AI agents to development tools across your org.

- IDE Extension Governance: Track all installed extensions across VSCode and Cursor organization-wide. Implement allowlists and approval workflows.

- Local npm Package Monitoring: Monitor npm packages installed on developer machines, whether by a human or an AI coding agent. When a compromised package is detected, remotely remove it from affected machines across the entire org, containing the blast radius within minutes.

When an AI coding agent receives a prompt to "build a login system with OAuth," it may install packages, connect to MCP servers, and invoke IDE extensions, all before any code reaches a repository. If any of those components is compromised, the organization's credentials are at risk.

Stage 2: Code Repository Security (NPM Supply Chain Security)

AI coding agents dynamically resolve packages and push them into your codebase through pull requests, often without human review. StepSecurity's NPM Supply Chain Security capabilities provide proactive defense at this stage.

- Cooldown Policies: Block newly published npm package versions for a configurable period. Most supply chain attacks exploit fresh packages before the community can review them. Customers with cooldown policies enabled were protected from npm supply chain attacks in 2025 such as the Shai-Hulud campaigns even before public advisories existed.

- PR-Level Blocking: Automatically block pull requests that introduce known compromised or suspicious npm packages.

- Org-Wide Package Search: When an AI coding agent introduces an unfamiliar package, or when a supply chain incident breaks, you need to instantly find every instance of that package across all repositories, pull requests, and developer machines. StepSecurity's package search provides this in seconds.

- Historical Exposure Tracking: Even after a malicious package is removed from npm, track whether your organization was exposed during the window it was available, which is critical for incident response.
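
The cooldown idea from the list above can be pictured with a short sketch. It assumes you already know each version's publish time and simply refuses anything younger than the configured window. The function names, version numbers, and dates are hypothetical illustrations, not StepSecurity's implementation.

```python
from datetime import datetime, timedelta, timezone


def in_cooldown(published_at, now, cooldown):
    """True while a version is younger than the cooldown window."""
    return now - published_at < cooldown


def newest_allowed(versions, now, cooldown):
    """Pick the most recently published version that has cleared cooldown.

    `versions` maps version strings to timezone-aware publish times.
    Returns None when every version is still inside the window.
    """
    eligible = {v: t for v, t in versions.items()
                if not in_cooldown(t, now, cooldown)}
    if not eligible:
        return None
    return max(eligible, key=eligible.get)


# Made-up publish history for a made-up package.
now = datetime(2025, 9, 20, tzinfo=timezone.utc)
versions = {
    "4.1.0": datetime(2025, 6, 1, tzinfo=timezone.utc),
    "4.1.1": datetime(2025, 9, 19, 18, 0, tzinfo=timezone.utc),  # 6 hours old
}

choice = newest_allowed(versions, now, cooldown=timedelta(days=3))
print(choice)  # 4.1.0
```

With a three-day window, the six-hour-old release is skipped and the resolver falls back to the older version. The tradeoff is a short delay on legitimate updates in exchange for time for the community to spot a malicious release.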

Explore the interactive demo to see how npm Package Search works in action:

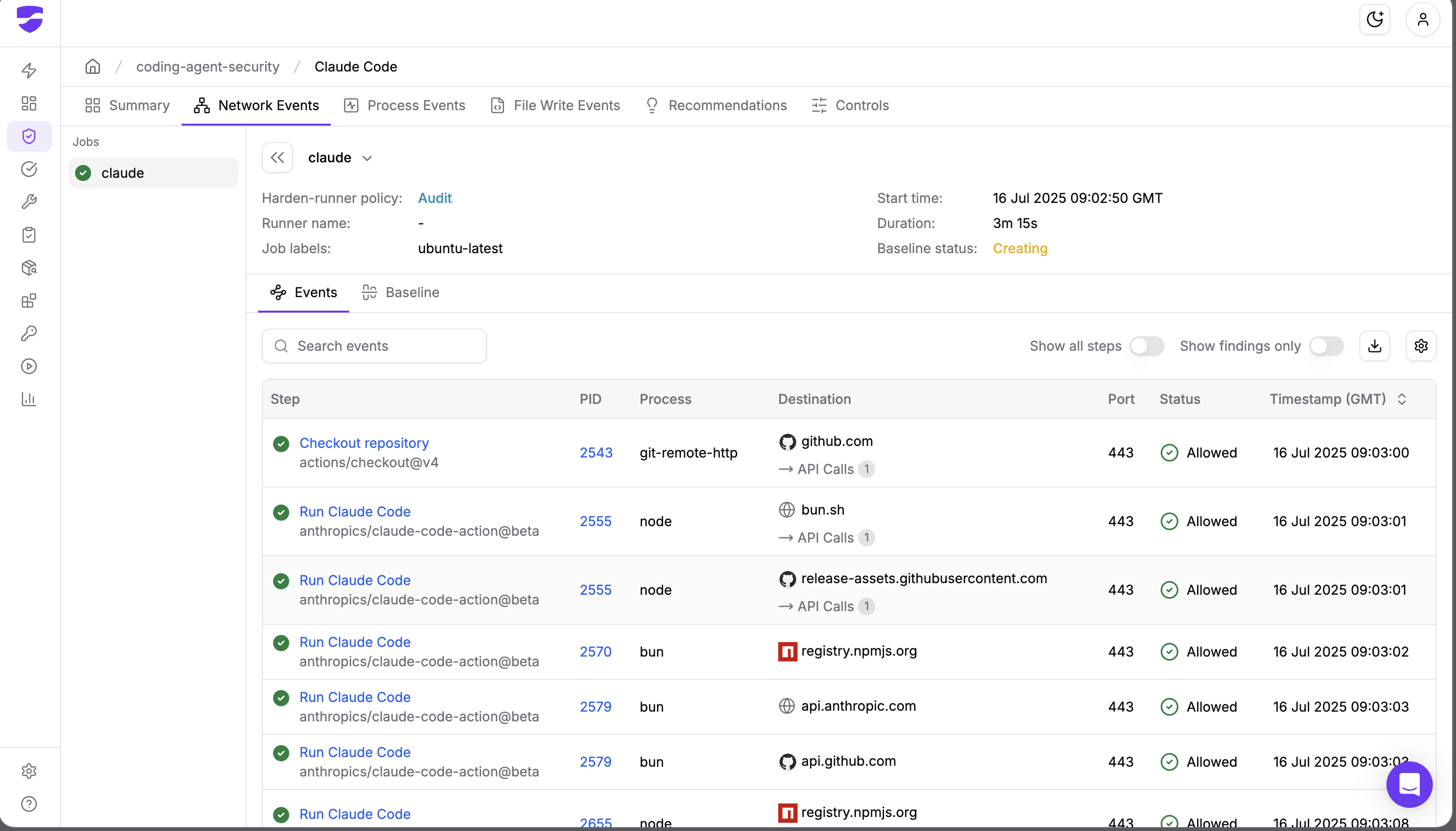

Stage 3: CI/CD Pipeline Security (Harden-Runner)

AI coding agents operating in CI/CD pipelines have privileged access to production secrets and infrastructure. Securing this stage requires purpose-built runtime monitoring that understands CI/CD context, something traditional EDR fundamentally cannot provide.

- Runtime Visibility: Harden-Runner monitors every network call, process execution, and file event during a GitHub Actions workflow run, correlating each event with the specific step that triggered it. AI coding agents become fully observable rather than black boxes.

- Behavioral Baseline Detection: Harden-Runner automatically establishes normal network behavior for every job. When a job contacts endpoints outside its baseline — for example, a compromised dependency phoning home — the anomaly is flagged immediately. This catches novel attacks that signature-based tools miss.

- Egress Policy Enforcement: In block mode, unauthorized outbound traffic is blocked at the DNS, HTTPS, and network layers — preventing exfiltration of CI/CD credentials and source code. This is especially critical for Claude Code, which operates in GitHub Actions without any built-in network firewall.

- Process Attribution: Trace every network call back to the exact process (with PID) that made it — essential context for incident investigation that generic endpoint tools can't provide in CI/CD environments.

- Integration with AI coding agents is straightforward: We've published step-by-step guides for integrating Harden-Runner with the major CI/CD coding agents.
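
As a rough mental model of the baseline detection, egress enforcement, and process attribution bullets above, consider the sketch below. It assumes outbound events have already been captured as records carrying the workflow step, process name, PID, and destination host. The `Event` type, the `evaluate` function, and all hosts and PIDs are hypothetical; Harden-Runner's actual mechanics are not shown here.

```python
from collections import namedtuple

# One outbound connection observed during a job (hypothetical shape).
Event = namedtuple("Event", "step process pid host")


def evaluate(events, baseline, block=False):
    """Split events into (allowed, flagged) against a per-job baseline.

    Hosts outside the baseline are always flagged. In report-only mode
    (block=False) flagged traffic is still allowed through; in block mode
    it is denied.
    """
    allowed, flagged = [], []
    for ev in events:
        if ev.host in baseline:
            allowed.append(ev)
            continue
        flagged.append(ev)
        if not block:
            allowed.append(ev)  # report-only: record it, but let it through
    return allowed, flagged


baseline = {"github.com", "registry.npmjs.org"}
events = [
    Event("Install dependencies", "npm", 2114, "registry.npmjs.org"),
    Event("Install dependencies", "node", 2201, "attacker.example"),
]

allowed, flagged = evaluate(events, baseline, block=True)
for ev in flagged:
    print(f"blocked: {ev.process} (pid {ev.pid}) in step '{ev.step}' -> {ev.host}")
```

Because each flagged record keeps the step, process, and PID, an investigator can tell at a glance that the unexpected call came from a `node` process spawned during dependency installation rather than from the agent's own tooling.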

Why End-to-End Coverage Matters

Modern supply chain attacks don't respect stage boundaries. Shai-Hulud compromised npm packages, stole credentials from developer machines, and used those credentials to publish malicious releases bypassing CI/CD controls. The NX compromise simultaneously hit the npm registry and VSCode extensions. Trust Wallet showed how a Stage 1 credential theft cascaded into a malicious production release.

Point solutions covering a single stage leave the other two wide open. StepSecurity is the only platform providing unified visibility and enforcement across all three stages.


Conclusion

Enterprise adoption of vibe coding and agentic software development is accelerating. The attack surface is growing in lockstep, and modern supply chain attacks are multi-stage operations that cross boundaries between developer machines, package registries, and CI/CD pipelines.

Security solutions focused on a single stage leave critical gaps. Defending against these attacks requires controls at every stage where AI coding agents operate: the development environment where vibe coding begins, the code repository where AI-generated dependencies enter the codebase, and the CI/CD pipeline where production secrets are at stake.

StepSecurity provides that end-to-end defense: battle-tested against real-world attacks, trusted by leading enterprises and open-source projects, and built for the agentic development era.

Interested in securing your agentic software development lifecycle? Request a demo or start free today.
